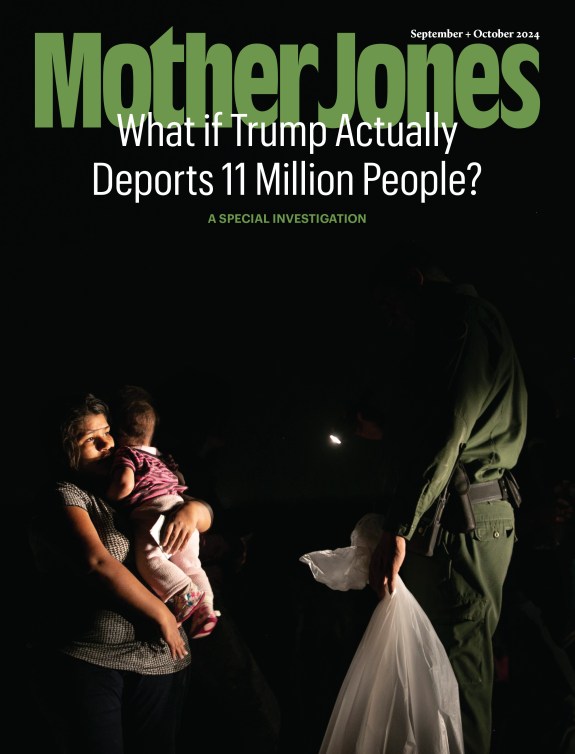

A vigil three days after a gunman livestreamed his murder of at least 50 people at two New Zealand mosques. PJ Heller/ZUMA Wire

By Saturday night, Facebook said it had removed 1.5 million videos depicting the deadly mass shooting in New Zealand that had taken place roughly 24 hours earlier. The videos were copies of the shooter’s original livestream of the killings, which he broadcast on Facebook and which the company removed about 20 minutes after it first went live.

“Our hearts go out to the victims, their families and the community affected by the horrific terrorist attacks in Christchurch,” said executive Chris Sonderby in a post on Facebook’s public relations site. “We continue to work around the clock to prevent this content from appearing on our site, using a combination of technology and people.”

The livestream of the shootings, which left 50 people dead at two Christchurch mosques, and its wide copying brought unprecedented attention to tech giants’ ability to grapple with violent content, especially in real time. But it is far from the first time the issue has surfaced, or that tech platforms have said they need to do a better job of preventing the spread of such video.

While YouTube did not release an exact count of the copies of the shooter’s video it took down, it described the moment as an opportunity for improvement: “Every time a tragedy like this happens we learn something new, and in this case it was the unprecedented volume” of videos, Neal Mohan, YouTube’s chief product officer, told the Washington Post. “Frankly, I would have liked to get a handle on this earlier.”

Live streaming of violence on tech platforms can be horrifying, but is not always intentional; it has also been used in some cases to help solve crimes or bring accountability to police who use excessive force. But as the New Zealand shooter demonstrated, online platforms can also be weaponized to glorify and spread violence.

While tech companies have pledged renewed efforts to keep content like the New Zealand livestream off their platforms, fixing their technology may be easier said than done. Between July and September of 2018, Facebook reports that it took down 15.4 million pieces of violent and graphic content. A large share of those removals relied on automated systems, but livestreams can be much more difficult to monitor.

“There are not enough moderators in the world to monitor every live stream,” Sarah T. Roberts, a professor at UCLA who has been researching content-moderation strategies for nearly a decade, told the New Yorker. “Mainstream platforms are putting resources into moderation, but it’s a bit like closing the barn door after the horses have gotten out.”

Some U.S. lawmakers are hoping increased scrutiny will bring greater pressure on the companies. On Tuesday, Rep. Bennie Thompson (D-Miss.), who chairs the House Homeland Security Committee, called for Twitter, YouTube, Facebook, and Microsoft to testify on their responses to the New Zealand attack.

Here’s a timeline of earlier cases in which violent footage has found an outlet on social media platforms:

August 26, 2015

A disgruntled former broadcast news reporter killed two former coworkers outside of Roanoke, Virginia, while they were filming live, uploading his own videos to Facebook and Twitter and documenting his actions in real time. Both Facebook and Twitter removed the shooter’s account and footage for violating their user policies, but for hours, users who had auto-play enabled were subjected to the violence in their feeds.

February 27, 2016

An 18-year-old girl in Ohio livestreamed the rape of her 17-year-old friend on Periscope, an app owned by Twitter. Twitter declined to comment on the matter.

December 30, 2016

A 12-year-old girl livestreamed her suicide on an app called Live.Me. The footage quickly found its way onto both YouTube and Facebook; in Facebook’s case, it took the company two weeks to get the video down.

According to a Daily Dot article, her death was part of a growing trend of suicide livestreams on Facebook. When another teenage girl livestreamed her suicide less than a month later, a Facebook spokesperson told the Miami Herald that the company aimed “to interrupt these streams as quickly as possible when they’re reported to us,” and pointed to a tool that lets users “report violations during a live broadcast.”

April 18, 2017

A Cleveland man posted a video of a murder that stayed up for two hours before Facebook took it down. According to an NBC report, the murder itself wasn’t livestreamed, but the man livestreamed a video expressing his intent to commit it beforehand and a confession afterward. In a statement, CEO Mark Zuckerberg admitted that “we have a lot of work, and we will keep doing all we can to prevent tragedies like this from happening.” Justin Osofsky, a vice president at the company, told NBC News that Facebook was “reviewing our reporting flows.”

April 25, 2017

Later that month, in Thailand, a 20-year-old man livestreamed himself murdering his infant daughter and then committing suicide. “This is an appalling incident and our hearts go out to the family of the victim,” a Facebook spokesman said. “There is absolutely no place for acts of this kind on Facebook and the footage has now been removed.”

April 12, 2018

Rannita Williams was shot to death by an ex-boyfriend while he streamed the murder on Facebook Live. The video was removed the same day.

Listen to Mother Jones reporters Ali Breland, Mark Follman, and Pema Levy describe the ways in which giant tech companies have proven themselves unable or unwilling to stop the spread of hate speech on their platforms and have become unwitting allies of the Christchurch shooter, on this week’s episode of the Mother Jones Podcast: